Cursor has rolled out a self-summarization method, trained with reinforcement learning (RL), for its Composer model. It delivers a measurable accuracy gain on complex coding tasks while cutting token consumption to roughly one-fifth of full-context processing.

Self-Summarization Outperforms Compaction Across CursorBench

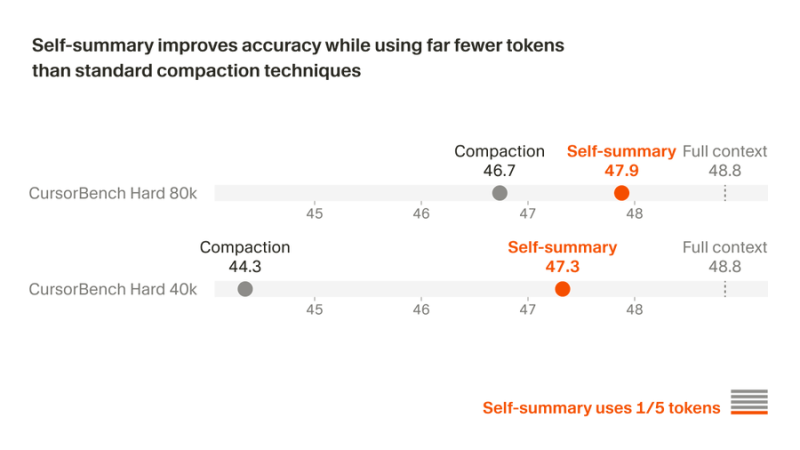

Traditional prompt-based compaction has been the default workaround for context limits in long coding sessions, but Cursor's internal benchmarks show a consistent gap in favor of self-summarization. On CursorBench Hard 80k, self-summary scores 47.9 against compaction's 46.7, a 1.2-point edge. On the 40k variant the advantage widens to 3.0 points, with self-summary at 47.3 versus compaction's 44.3. For a sense of how quickly these numbers are moving, Cursor has separately benchmarked GPT-5.4 at a score of 60 on CursorBench.

What makes this meaningful is not just the score delta but the mechanism behind it. Rather than relying on a fixed prompt to compact or truncate its history, the model is trained via reinforcement learning to summarize its own context as it works. That lets it keep going when it hits its context ceiling without losing track of where it left off.
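Cursor has not published code for this, but the control flow described above (once the context budget is exceeded, fold the accumulated history into a summary and carry on) can be sketched in a few lines of Python. Everything here is a hypothetical stand-in: the whitespace-based `count_tokens`, the `budget` value, and `toy_summarize`, which just keeps short step labels where the real Composer model would write its own summary. The RL training that teaches the model what is worth keeping is not shown.

```python
from dataclasses import dataclass, field
from typing import Callable, List


def count_tokens(text: str) -> int:
    # Hypothetical tokenizer: whitespace-separated words stand in for model tokens.
    return len(text.split())


def toy_summarize(previous: str, messages: List[str], max_words: int = 6) -> str:
    # Hypothetical stand-in for the model's own summary: keep each message's
    # leading "step N" label and cap the length so the summary stays bounded.
    labels = previous.split() + [m.split(":")[0].replace(" ", "_") for m in messages]
    return " ".join(labels[-max_words:])


@dataclass
class SelfSummarizingContext:
    budget: int                                   # assumed context ceiling, in tokens
    summarize: Callable[[str, List[str]], str]    # (old summary, messages) -> new summary
    summary: str = ""
    recent: List[str] = field(default_factory=list)
    summaries_made: int = 0

    def total_tokens(self) -> int:
        return count_tokens(self.summary) + sum(count_tokens(m) for m in self.recent)

    def append(self, message: str) -> None:
        self.recent.append(message)
        # On overflow, fold every message except the newest into the running
        # summary instead of discarding it. A single oversized message can still
        # exceed the budget; a real agent would have to handle that case too.
        if self.total_tokens() > self.budget and len(self.recent) > 1:
            self.summary = self.summarize(self.summary, self.recent[:-1])
            self.recent = self.recent[-1:]
            self.summaries_made += 1


ctx = SelfSummarizingContext(budget=20, summarize=toy_summarize)
for i in range(10):
    ctx.append(f"step {i}: edited file_{i}.py and ran the tests")
print(ctx.summaries_made, ctx.total_tokens())  # → 6 14
print(ctx.summary)  # → step_3 step_4 step_5 step_6 step_7 step_8
```

Keeping a running summary plus the newest message, rather than dropping old turns outright, is what separates this pattern from plain truncation; in Cursor's approach, the quality of that summary is what the RL training is meant to improve.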

RL Optimization Targets Real-World Coding Efficiency

The efficiency case is just as strong. Running at roughly one-fifth the token load of full-context processing, self-summarization moves Cursor closer to a sustainable architecture for long-horizon agentic tasks: the kind that involve not just writing code but navigating entire codebases across many steps. It also fits another data point: Cursor's AI agents now account for 30% of merged pull requests inside the company, a sign that this kind of long-horizon agent work is already part of its production workflows.
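Why a bounded context saves tokens on long runs can be shown with back-of-the-envelope arithmetic. The sketch below assumes each agent step re-reads its whole context (no prompt caching) and adds a fixed number of new tokens; the function name and all numbers are hypothetical, and it does not reproduce how Cursor measured its one-fifth figure.

```python
from typing import Optional


def cumulative_tokens(steps: int, tokens_per_step: int, cap: Optional[int] = None) -> int:
    """Total tokens read over an agent run, assuming each step re-reads its
    entire context and adds `tokens_per_step` new tokens to it. With `cap`,
    the context is held at or below that size, as a summarizing agent might do."""
    total = 0
    context = 0
    for _ in range(steps):
        context += tokens_per_step
        if cap is not None:
            context = min(context, cap)
        total += context
    return total


# Hypothetical run: 100 steps, 1,000 new tokens per step, 20,000-token cap.
full = cumulative_tokens(100, 1_000)
capped = cumulative_tokens(100, 1_000, cap=20_000)
print(full, capped, round(capped / full, 2))  # → 5050000 1810000 0.36
```

Under these assumptions, full-context reading grows quadratically with the number of steps while the capped run grows linearly once the cap is reached, so the ratio keeps falling as runs get longer. The toy model ignores the tokens spent writing the summaries themselves.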

The broader implication is straightforward: post-training optimization through reinforcement learning is becoming a practical lever for improving both accuracy and cost in AI coding systems. As agents are deployed on increasingly complex, multi-step tasks, context management becomes a core engineering challenge, not an afterthought. Cursor's approach treats it as one.

Saad Ullah