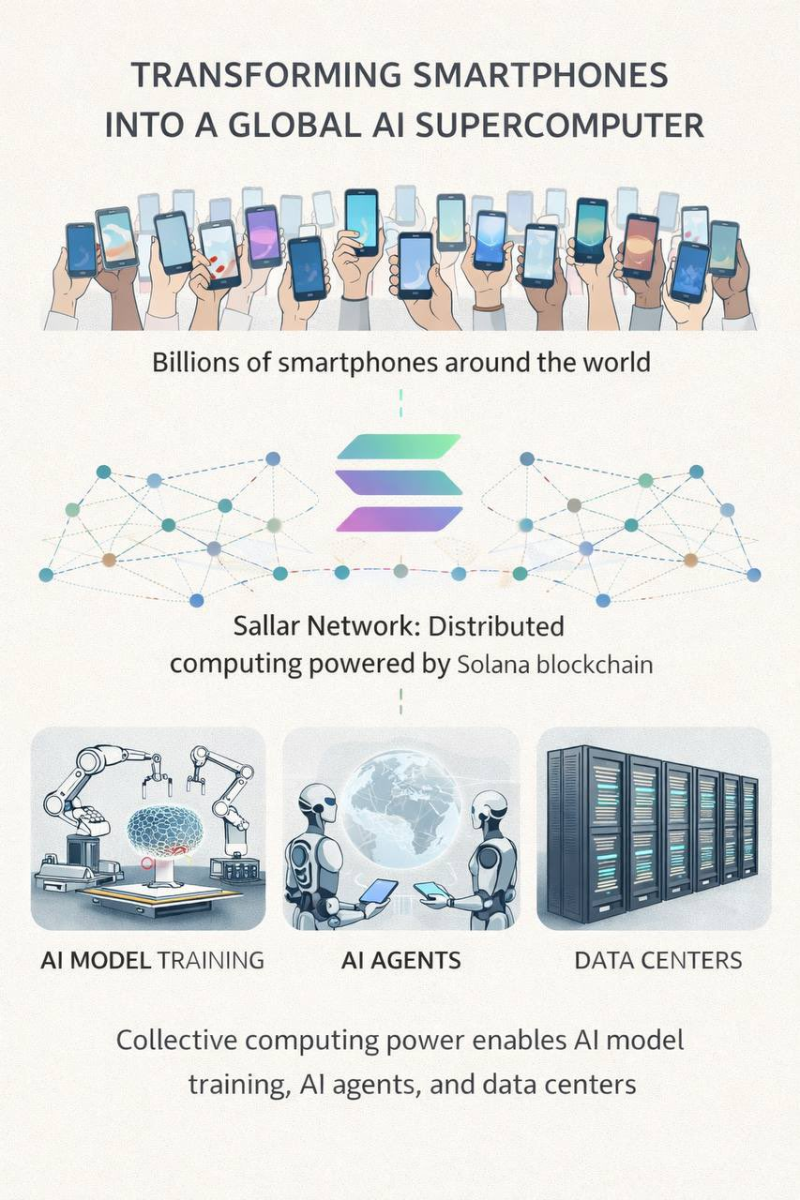

What if the most powerful AI computer on Earth were already in everyone's pocket? That is the premise behind Sallar, a decentralized physical infrastructure network (DePIN) built on the Solana (SOL) blockchain. The project proposes linking billions of smartphones into a unified computing layer capable of handling serious AI workloads, without a single data center in sight.

How Sallar Turns Your Phone Into an AI Node

Modern smartphones pack surprisingly capable CPUs, GPUs, and memory alongside always-on internet connections. Sallar taps into that dormant capacity, routing tasks such as AI model training, data analysis, cryptography, and scientific simulations across a global mesh of participating devices. Contributors earn $ALL tokens in return, effectively monetizing hardware that would otherwise sit idle. Early deployments already show thousands of connected devices feeding processing power into the network.

6 Billion Devices and the Future of Distributed AI Compute

The numbers are striking. There are currently more than 6 billion smartphones in active use worldwide. Even a modest fraction opting into Sallar could assemble one of the largest computing systems ever built, addressing the single biggest bottleneck in modern AI development: raw GPU capacity. The broader push toward edge-based AI infrastructure is already visible elsewhere. Elon Musk has hinted at a Starlink AI phone optimized for neural networks with maximum performance per watt, a sign of how next-generation mobile hardware could dramatically improve AI efficiency at the device level.
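To make the scale argument concrete, here is a rough back-of-envelope sketch in Python. Only the 6 billion device count comes from the figures above. The opt-in rate, the per-device throughput, and the availability fraction are hypothetical placeholders chosen for illustration, not measurements and not numbers published by Sallar.

```python
# Back-of-envelope estimate of pooled smartphone compute.
# Only the device count is taken from the article; every other
# number passed in below is a hypothetical assumption.

SMARTPHONES_IN_USE = 6_000_000_000  # "more than 6 billion" in active use


def aggregate_tflops(opt_in_rate: float,
                     tflops_per_device: float,
                     availability: float) -> float:
    """Return the estimated pooled throughput in TFLOPS.

    opt_in_rate       -- fraction of all smartphones that join (0..1)
    tflops_per_device -- assumed usable throughput of one phone
    availability      -- fraction of time a joined phone is online and idle (0..1)
    """
    if not 0.0 <= opt_in_rate <= 1.0:
        raise ValueError("opt_in_rate must be between 0 and 1")
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    if tflops_per_device < 0:
        raise ValueError("tflops_per_device must be non-negative")

    participating = SMARTPHONES_IN_USE * opt_in_rate
    return participating * availability * tflops_per_device


if __name__ == "__main__":
    # Hypothetical scenario: 1% opt in, 1 TFLOPS each, idle a quarter of the time.
    estimate = aggregate_tflops(opt_in_rate=0.01,
                                tflops_per_device=1.0,
                                availability=0.25)
    print(f"Estimated pooled throughput: {estimate:,.0f} TFLOPS")
```

The result is a raw sum and should be read as an upper bound under those assumptions. It ignores network latency, coordination overhead, and the difference between phone and data center hardware, all of which would reduce what the network could deliver in practice.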

The DePIN model behind Sallar distributes infrastructure ownership among participants rather than concentrating it within a handful of corporations. That shift matters as AI compute demand continues to surge. Musk has also unveiled a plan for a 100 GW satellite-based AI compute network, a vision that points to the same conclusion: the next generation of AI infrastructure will span satellites, edge devices, and decentralized networks rather than traditional cloud farms alone.

Sallar's approach may still face real challenges around latency, coordination overhead, and device availability. But the underlying logic is hard to dismiss. Billions of underutilized processors, already distributed across the globe, represent a computing resource that no single company could afford to build from scratch.

Marina Lyubimova
