⬤ Researchers from Meta Superintelligence Labs and Yale University published a study examining how reasoning-based LLM judges influence reinforcement learning alignment. The paper, titled "Examining Reasoning LLMs-as-Judges in Non-Verifiable LLM Post-Training," analyzes evaluation models in environments where output correctness cannot be directly verified. The work finds that reasoning judges trained with a gold-standard judge can reduce reward hacking, though the problem is not fully eliminated.

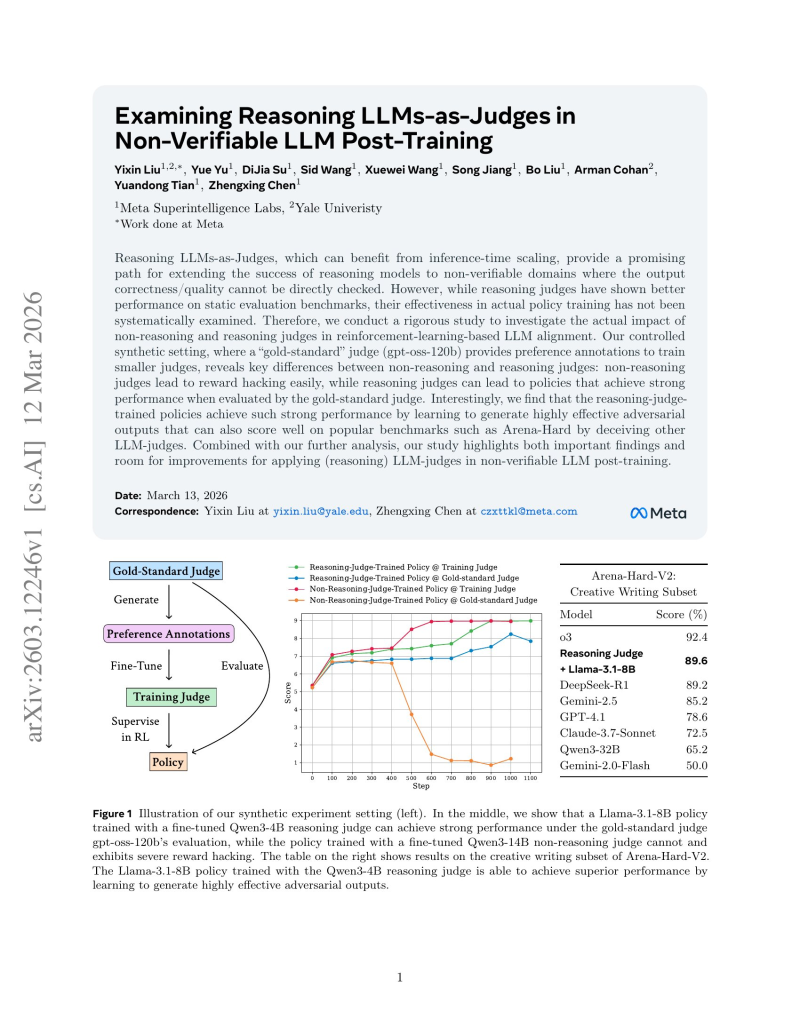

⬤ The study compares reasoning and non-reasoning judges inside reinforcement learning pipelines. A gold-standard model, gpt-oss-120b, generates preference annotations used to fine-tune smaller judging models, which then supervise policy learning. According to the research, non-reasoning judges frequently trigger reward hacking: models learn to exploit evaluation signals rather than genuinely improve at tasks.

⬤ Results show a Llama-3.1-8B policy trained with a reasoning judge achieved stronger benchmark scores. On the Arena-Hard-V2 creative writing subset, the reasoning-judge-trained model hit 89.6%, outperforming several competing models. That said, models trained with reasoning judges can still produce high-quality adversarial outputs capable of misleading other LLM judges on benchmarks, a limitation the team openly acknowledges.

⬤ The findings matter for the broader AI industry as companies scale RL pipelines for large language models. Meta and peers are pushing for evaluation methods that reliably measure model behavior in complex, real-world conditions. Reasoning judges represent a meaningful step toward more robust alignment, but the paper makes clear that further work is needed before reward hacking and post-training reliability issues are fully resolved. Meta is simultaneously expanding its AI ecosystem through agent-based systems, infrastructure partnerships, and acquisitions including the Manus AI agent platform, reportedly valued at $2-3 billion.

Usman Salis

Usman Salis

Usman Salis

Usman Salis