New research from Epoch AI is challenging conventional wisdom about what's really driving AI progress. While many assume breakthrough algorithms are the secret sauce, the data tells a different story - it's the massive scaling of computing power that's amplifying every innovation we make. The findings show efficiency gains that would have seemed impossible just a few years ago, all thanks to throwing more computational muscle at the problem.

How a 223% Annual Growth Rate Became Possible

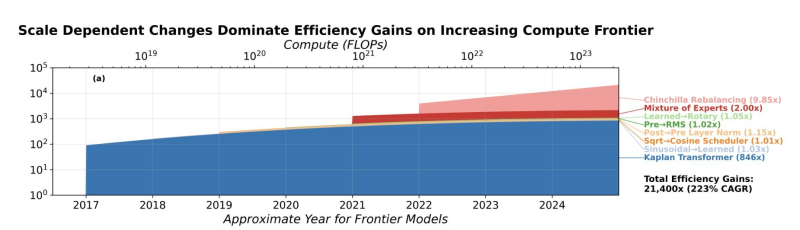

Epoch AI's latest analysis reveals something remarkable: AI training efficiency has hit approximately 21,400× improvement along the compute frontier.

That translates to a compound annual growth rate of 223% in efficiency - numbers that sound almost too good to be true. But here's the kicker: this isn't just about clever coding. Software-driven efficiency alone might be reaching close to 10× per year, though researchers admit there's substantial uncertainty in that estimate.

Scale-dependent algorithm changes combined with compute scaling can, on their own, explain roughly 2.23× per year of software improvement.
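Growth figures like these are easy to misread, because a percentage growth rate and a per-year multiplier are two different conventions. The sketch below is only an illustration, not Epoch AI's methodology: the helper names are hypothetical, and it assumes steady compound growth. It converts between the two conventions and estimates how many years of compounding a given cumulative gain would imply.

```python
import math


def growth_pct_to_multiplier(growth_pct: float) -> float:
    """Convert a yearly percentage growth rate into a yearly multiplier.

    Example: 100% growth per year means the quantity doubles (2.0x).
    """
    return 1.0 + growth_pct / 100.0


def multiplier_to_growth_pct(multiplier: float) -> float:
    """Convert a yearly multiplier into a yearly percentage growth rate."""
    return (multiplier - 1.0) * 100.0


def years_to_reach(total_gain: float, multiplier: float) -> float:
    """Years of steady compounding needed for `multiplier` to reach `total_gain`.

    Solves multiplier ** years == total_gain for years.
    """
    if total_gain <= 0 or multiplier <= 1:
        raise ValueError("total_gain must be > 0 and multiplier must be > 1")
    return math.log(total_gain) / math.log(multiplier)


if __name__ == "__main__":
    # Figures quoted in the article; how they relate to each other is
    # an assumption made here purely for illustration.
    total_gain = 21_400
    for label, mult in [
        ("223% growth read literally", growth_pct_to_multiplier(223)),
        ("2.23x per year", 2.23),
    ]:
        pct = multiplier_to_growth_pct(mult)
        years = years_to_reach(total_gain, mult)
        print(f"{label}: {mult:.2f}x/yr = {pct:.0f}%/yr, "
              f"{years:.1f} years to reach {total_gain:,}x")
```

Read literally, 223% annual growth is a 3.23× yearly multiplier, while a 2.23× yearly multiplier corresponds to 123% growth. Under the steady-compounding assumption, a 21,400× cumulative gain would imply roughly 8.5 years at 3.23× per year or roughly 12.4 years at 2.23× per year. The article does not state the time span or which convention the 223% figure uses, so both readings are shown.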

The research connects to broader acceleration patterns detailed in "Epoch AI charts show 36× faster LLM progress in 2025," painting a picture of an industry moving faster than most people realize.

Scale-Dependent Changes Drive Real Innovation

The most interesting insight? Scale-dependent algorithmic changes dominate efficiency improvements as compute increases. Major architectural shifts, such as moving from LSTM to Transformer models, transitioning from Kaplan scaling to Chinchilla rebalancing, and adopting mixture-of-experts approaches, don't just add incremental gains. When combined with compute scaling, they deliver roughly 2.23× annual software improvement.

This compute amplification dynamic mirrors the infrastructure expansion race described in "Google leads with 927 MW as Amazon-backed Anthropic claims second in AI data center power race."

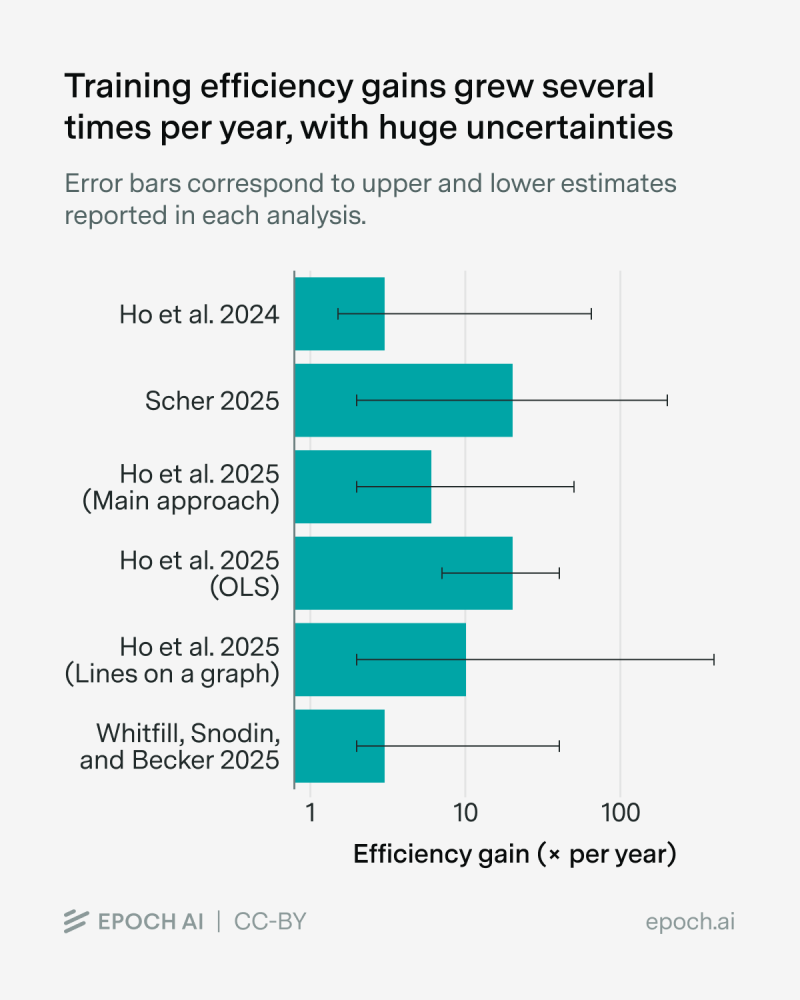

Multiple studies analyzed by Epoch AI - including Ho et al. 2024 and 2025 variants, plus Scher 2025 - show central estimates clustering around several-fold annual improvements, though with wide error bars reflecting different methodologies.

The practical results are already visible in production systems such as the one covered in "Qwen3TTS open-source release advances real-time multilingual AI voice," where scaled infrastructure converts research breakthroughs into working technology.

Peter Smith
