⬤ Cursor AI has rolled out Instant Grep, a new search system built to scan millions of files in milliseconds. The upgrade directly speeds up how AI agents retrieve relevant code, cutting the lag that slows down complex task execution. For developers working inside large repos, faster retrieval means agents spend less time waiting and more time doing.

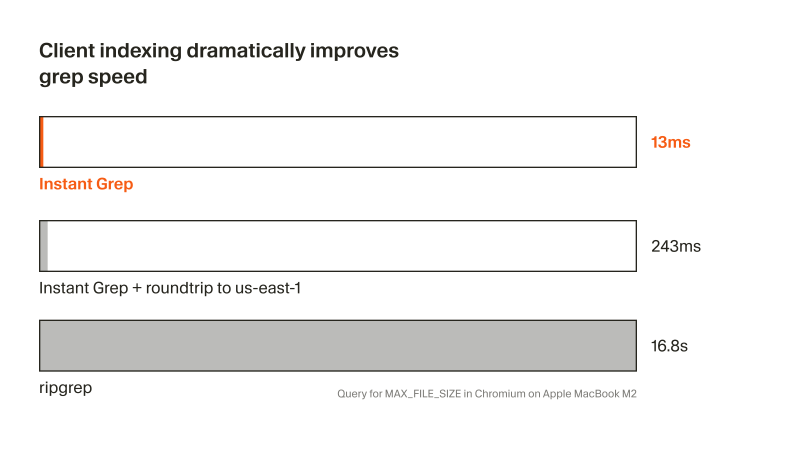

⬤ The benchmark numbers make the gap hard to ignore. Instant Grep returns results in roughly 13 milliseconds on its own; adding a network round-trip pushes that to about 243 milliseconds. By comparison, traditional tools like ripgrep can take up to 16.8 seconds on large codebases. That is not a marginal improvement; it is a different class of performance entirely.
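
Working the reported figures through shows the size of the gap. This uses ripgrep's 16.8-second worst case as the baseline, which may not be a like-for-like comparison with Instant Grep's typical latency:

$$
\frac{16.8\ \text{s}}{13\ \text{ms}} = \frac{16800}{13} \approx 1292\times,
\qquad
\frac{16.8\ \text{s}}{243\ \text{ms}} = \frac{16800}{243} \approx 69\times
$$

Even with the network round-trip, which accounts for roughly 243 − 13 = 230 ms, the reported speedup stays near two orders of magnitude.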

Cursor's architectural bet is client-side indexing, which lets queries run instantly without relying on external infrastructure.

⬤ Instant Grep is built around local indexing. By keeping index data on the client side, Cursor avoids the latency of round-trips to remote servers. The system applies algorithmic optimizations that prioritize speed and scale, letting it handle queries that would otherwise strain conventional tooling. This fits a pattern of compounding efficiency bets from Cursor, including self-summarization RL that cut token use 5x and benchmarking work in which GPT-5.4 scored 60% on CursorBench.
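
Cursor has not published Instant Grep's index format here, so the following is only an illustration of one classic way to make local search fast: a trigram index. Every 3-character substring of each file maps to the set of files containing it. A query is narrowed to files that contain all of its trigrams, and only those candidates are actually scanned. The class and method names (`TrigramIndex`, `add`, `candidates`, `search`) are hypothetical and are not Cursor's API.

```python
from collections import defaultdict


def trigrams(text: str) -> set[str]:
    """Return every 3-character substring of text."""
    return {text[i:i + 3] for i in range(len(text) - 2)}


class TrigramIndex:
    """Hypothetical in-memory trigram index (an assumption, not Cursor's design)."""

    def __init__(self) -> None:
        # trigram -> set of file paths whose content contains that trigram
        self.postings: dict[str, set[str]] = defaultdict(set)
        # path -> full file content, kept so candidates can be verified
        self.files: dict[str, str] = {}

    def add(self, path: str, content: str) -> None:
        """Index one file by recording each of its trigrams."""
        self.files[path] = content
        for tri in trigrams(content):
            self.postings[tri].add(path)

    def candidates(self, literal: str) -> set[str]:
        """Files containing every trigram of the query (a superset of true matches)."""
        tris = trigrams(literal)
        if not tris:
            # Queries shorter than 3 characters cannot be narrowed: scan all files.
            return set(self.files)
        # .get() avoids inserting empty entries into the defaultdict.
        sets = [self.postings.get(t, set()) for t in tris]
        return set.intersection(*sets)

    def search(self, literal: str) -> list[tuple[str, int, str]]:
        """Scan only candidate files and return (path, line_number, line) matches."""
        results = []
        for path in sorted(self.candidates(literal)):
            for line_no, line in enumerate(self.files[path].splitlines(), start=1):
                if literal in line:
                    results.append((path, line_no, line))
        return results


if __name__ == "__main__":
    idx = TrigramIndex()
    idx.add("src/a.py", "def load_config():\n    return {}\n")
    idx.add("src/b.py", "import os\nprint(os.getcwd())\n")
    print(idx.search("load_config"))
```

The index only rules files out. Because trigram intersection can produce false positives, the final scan over candidates is what guarantees correct matches. The speed comes from skipping the vast majority of files without reading them.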

⬤ Fast search is not just a quality-of-life upgrade; it changes what AI agents can realistically attempt. When retrieval becomes near-instant, agents can tackle tasks that require scanning broad context without hitting performance walls. Cursor has already reported that its AI agents now handle 30% of merged pull requests inside the company, and its new agent review tool, priced at $0.04 to $0.50 per scan with BugBot-level analysis, pushes that automation further. As codebases grow, the ability to search fast and act immediately will define which AI tools actually scale.

Marina Lyubimova