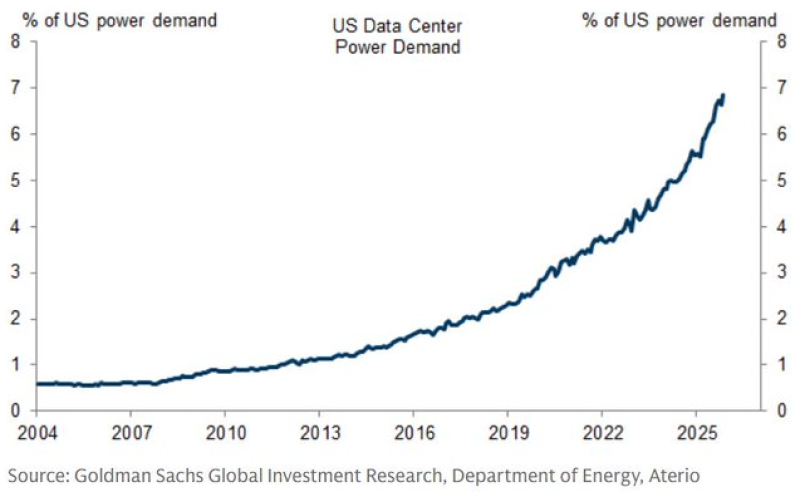

The AI revolution runs on electricity - and it is hungry. Data centers across the United States now account for roughly 7% of total national power consumption, a figure that was barely 0.5% just two decades ago. That roughly fourteenfold climb is no longer a future concern. It is already reshaping how the country plans and builds its energy grid.

From 0.5% to 7%: How AI Rewired US Energy Demand

For most of the 2000s and early 2010s, data center electricity use grew steadily but stayed largely off the public radar. The inflection came after 2018, when cloud computing scaled up and AI workloads began demanding serious computational power. The gap between US and Chinese electricity growth and AI infrastructure has become one of the defining strategic debates of the decade, with both nations racing to secure power supply for their AI buildouts.

The numbers carry real weight. A shift from 0.5% to 7% of national demand is not incremental - it is structural. Every major model training run, every inference cluster, every hyperscaler expansion adds to a load that grid operators are only beginning to plan around. Projections of AI data center power demand growth now factor into federal energy policy and utility investment cycles alike.

Why Energy Is Becoming the New Bottleneck for AI

Rising power demand is quietly becoming the primary constraint on AI expansion - more limiting, in some scenarios, than chips or talent. New data center projects face multi-year waits for grid interconnection. Some regions are already pushing back on new construction. The pace at which AI scales will increasingly depend on how fast power infrastructure can follow.

The broader global picture adds further pressure. China's solar power expansion, part of a wider global energy shift, illustrates how competitors are moving aggressively to secure renewable capacity for their own AI ambitions. For the US, the 7% figure is not just a data point - it is a signal that energy strategy and AI strategy are now the same conversation.

Marina Lyubimova
